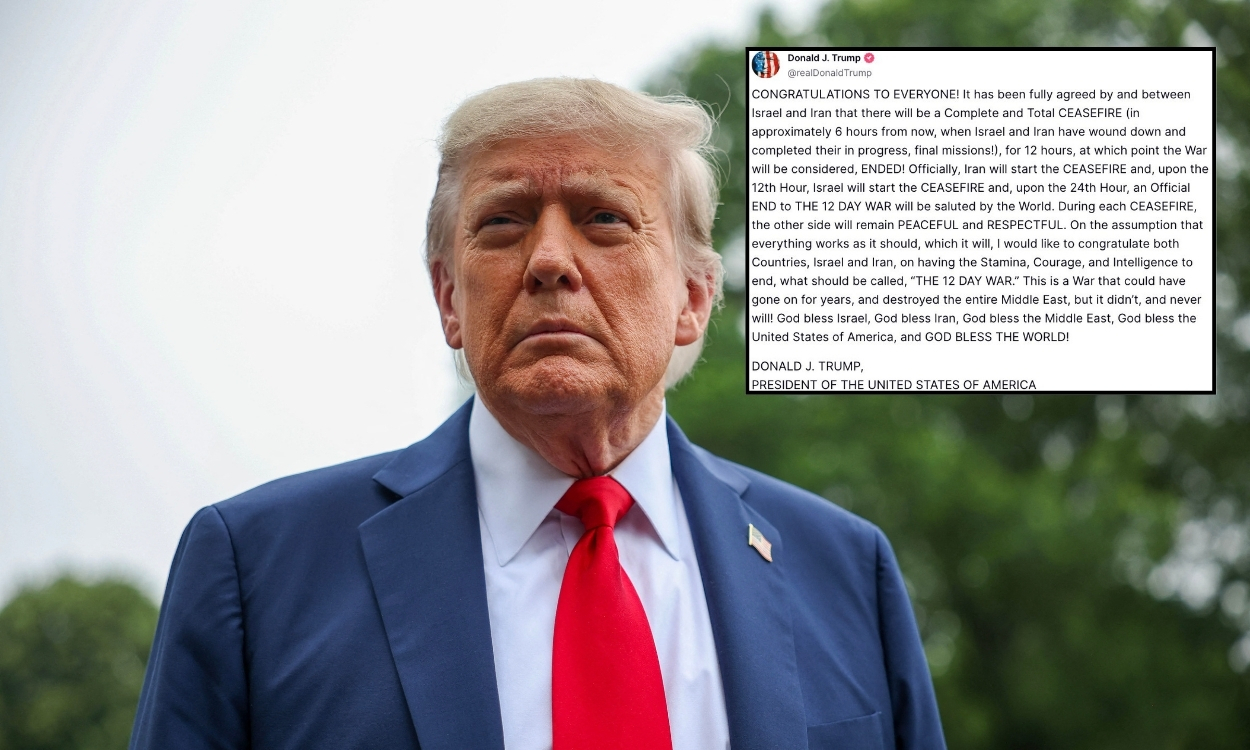

Trump-Musk kissing deepfake shown at HUD office raises disinformation concerns

A doctored video depicting Donald Trump kissing Elon Musk’s feet was shown at the HUD office in Washington, igniting controversy. The deepfake incident has intensified concerns over misinformation and its circulation within government spaces.

A digitally altered video showing Donald Trump kissing Elon Musk’s feet appeared on a screen at the Department of Housing and Urban Development (HUD) office in Washington. The manipulated footage, reportedly displayed within the agency, has escalated debates on digitally altered content and its influence on public perception. While the clip’s origin remains unknown, its presence in a federal setting has drawn scrutiny from officials and experts.

The incident has renewed concerns over AI-generated misinformation, particularly when it infiltrates government spaces. Critics argue that fabricated material in official environments exposes weaknesses in media oversight. As synthetic media grow more sophisticated, fears mount over their power to distort reality and shape narratives, especially in politically charged situations.

How did the AI-fabricated video reach a federal workspace?

Initial investigations indicate that unconventional digital channels allowed the manipulated content to evade standard protections. Analysts suggest that clandestine online networks, adept at distributing falsified media, exploited system vulnerabilities, enabling the altered recording to spread unnoticed. Encrypted platforms and obscure sharing methods may have played a role in its unexpected appearance inside a government facility.

Further assessments reveal institutional lapses that may have inadvertently permitted this security breach. Despite existing safeguards, deficiencies in monitoring protocols left room for the fabricated material to infiltrate internal networks. Regulators are now evaluating both technological weak points and external actors to reinforce defenses against future incursions of deceptive media in high-security environments.

What policy and technological reforms could counter deepfake misinformation?

In light of recent events, the public and private sectors are reassessing strategies to combat digital deception. Efforts focus on proactive solutions, including advanced verification tools, standardized authentication procedures, and cross-sector collaboration. Experts advocate for AI-driven monitoring systems to detect manipulated content before it shapes public perception.

Meanwhile, proponents of ethical artificial intelligence and digital transparency are pushing for stronger oversight of generative content. Proposed reforms seek a balance between innovation and accountability, ensuring compliance with strict guidelines. This coordinated effort aims to fortify defenses against disinformation while preserving the advantages of emerging technologies.